AI won’t make you dumb, but it won’t make you stronger either: Talking about brain-protecting AI learning methods

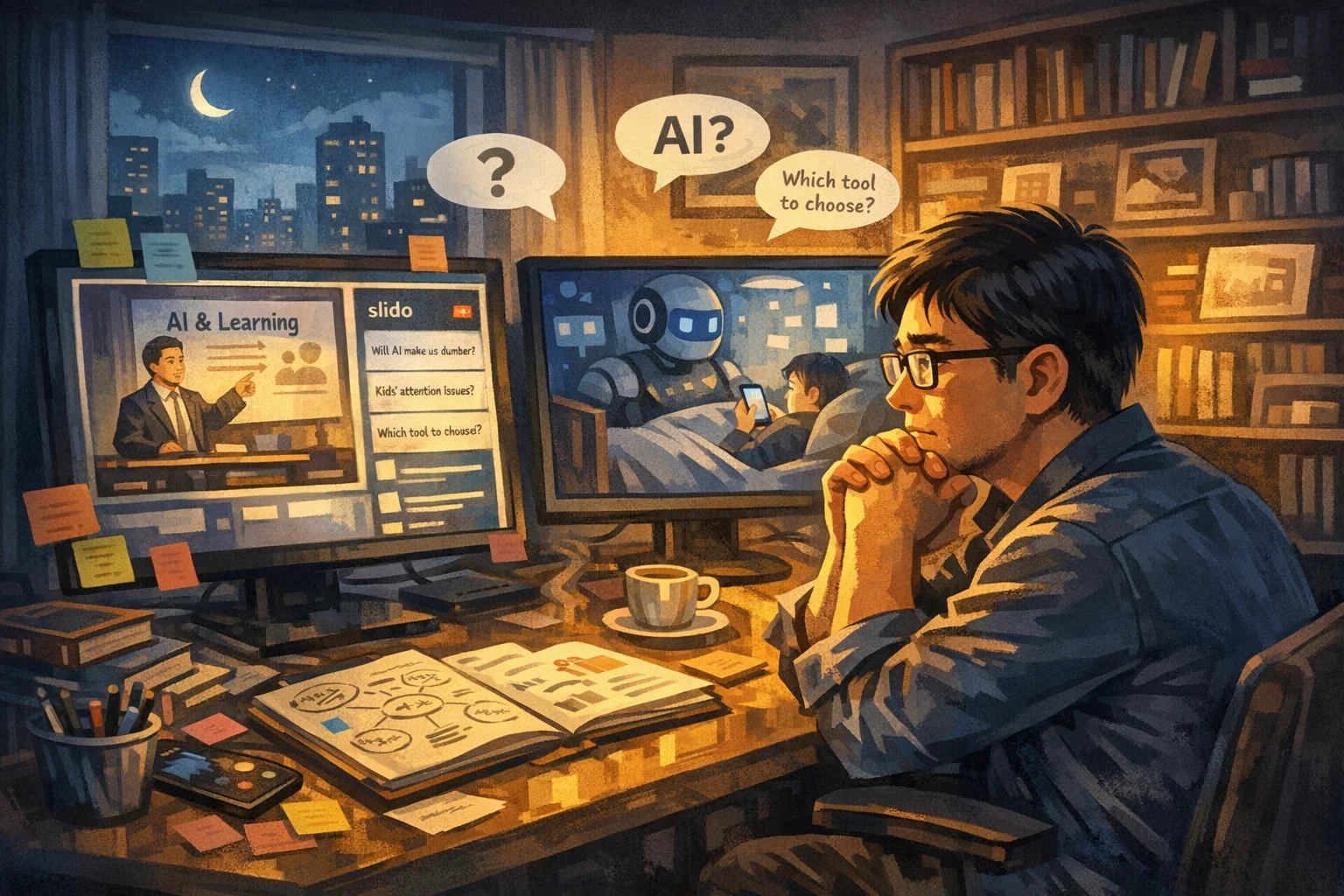

Last night, I sat in front of my screen in a very complicated mood.

I say it was complicated, not because I was anxious, but because I was listening to this talk in two roles at once. One is the content teacher and corporate consultant of more than ten years, who is used to looking for the structure in every paragraph, taking the framework apart, and working out how a point of view can be turned into something my readers can use. The other is an ordinary adult who also scrolls through his phone late at night and feels a little uneasy about how fast AI is developing.

I suspect the hundreds of people watching online felt a similar mix of emotions.

Questions popped up on Slido one after another, each like a small mirror reflecting the collective anxiety of our time: What should we do if our children’s attention is stolen? Will AI make people stupid? Do we still need to learn languages? With so many tools, which one should we choose? How do we keep children from just wanting ready-made answers?

I am all too familiar with these questions. In my classes, at corporate training sessions, and in the “AI is easy to use” club, I am asked something similar almost every week. But I suddenly realized one thing: the answers to these questions may not lie in the tools, but at a deeper level.

My good friend Li Haishuo, principal of Wei Ge International School, was not really talking about techniques for using AI tools last night. He was talking about a more fundamental proposition: In an era when information is readily available, how do we redefine “learning”?

This question hit me.

An unpleasant truth that must be faced

From the beginning, Principal Li Haishuo went straight to the core. He said the top-voted question on Slido was not about which model to use, but about a lack of focus. He immediately added, however, that before talking about attention, something more fundamental needs to be made clear.

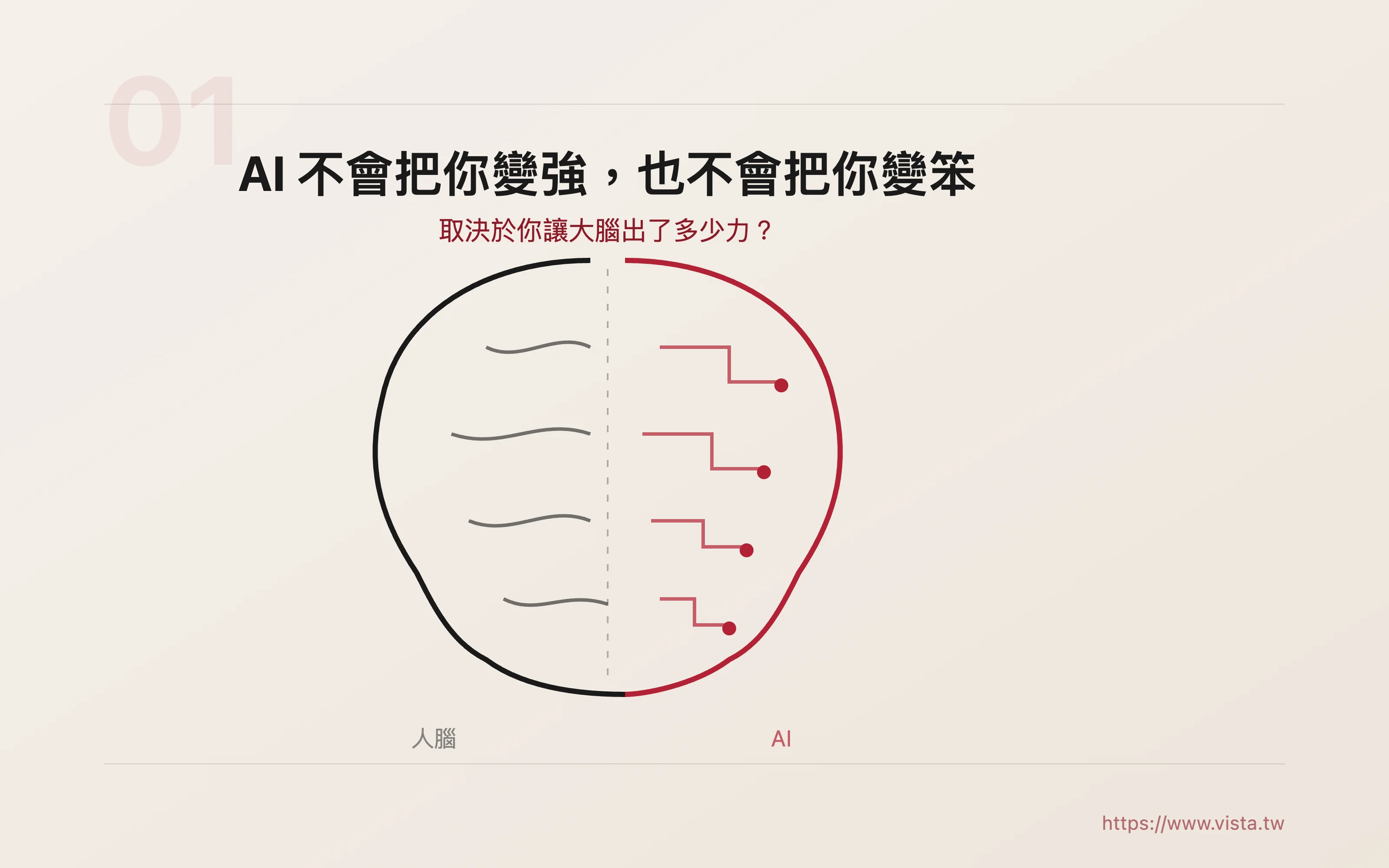

“If the way you use AI is wrong, the more you use it, the dumber you are likely to become.”

▲ AI will not make you stronger, nor will it make you dumb - the key lies in how you use it


He cited a research finding: a very high proportion of people who handed a task over to AI entirely could not remember what they had just produced once it was done. The reason is not mysterious. You took away the answer, but your brain did not exert any effort, so no traces were left. No memory, no muscle, no growth.

When I heard this, a very vivid picture came to my mind.

In the past few years of leading corporate training, I have often seen the same thing: trainees are very excited to learn to use ChatGPT or Claude to write plans, make briefings, or reply to emails. The quality of the output does improve, and the work goes several times faster. But when I ask, “What do you think is the core strategy of this project? Why did you choose this angle?”, many people are stunned.

It’s not that they lack intelligence. Their brains simply were never involved in the process.

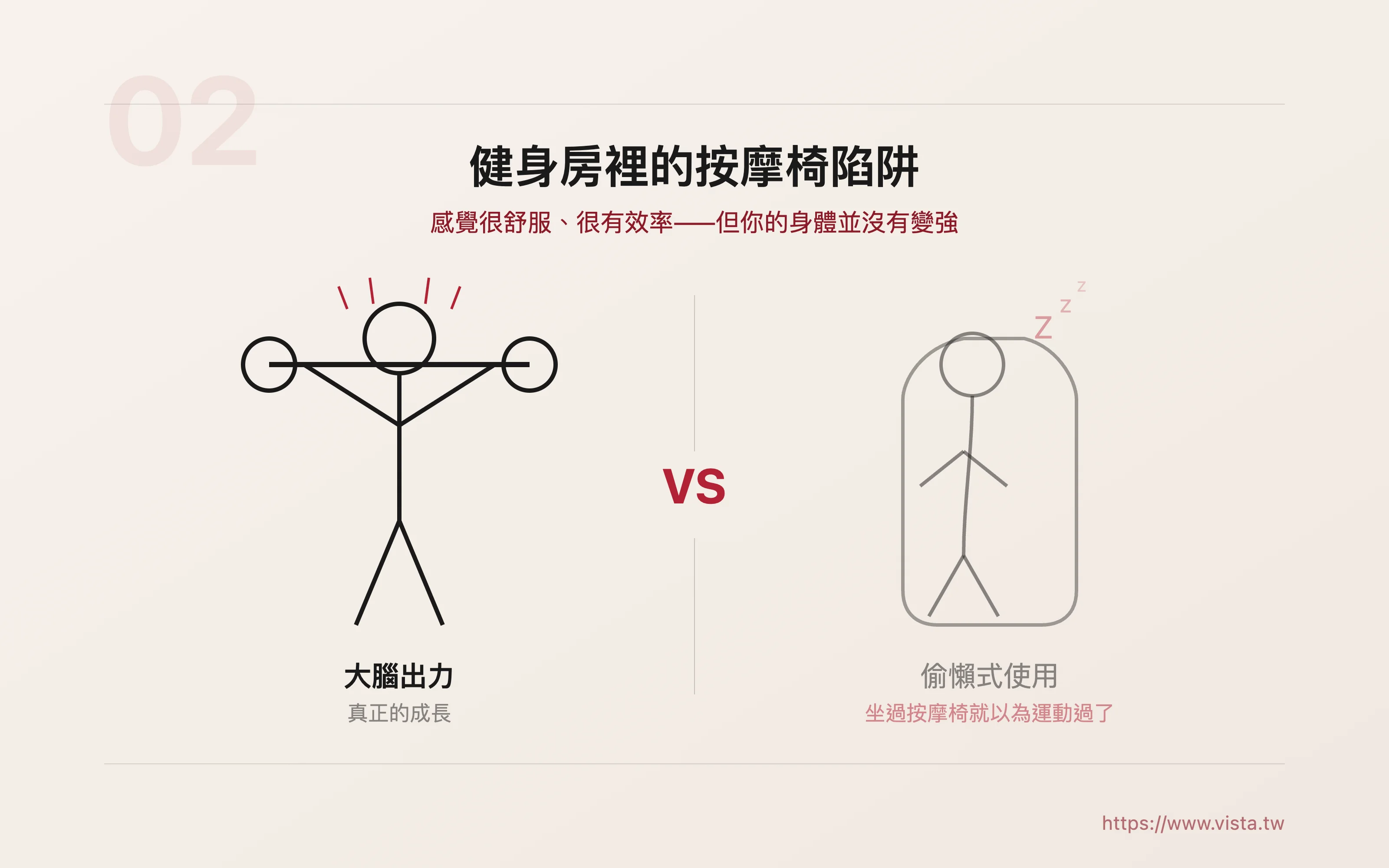

▲ Using AI is like sitting on an electric massage chair in the gym


The principal used an analogy that I think is accurate: the way many people use AI now is like sitting in an electric massage chair at the gym, pressing the button, and then telling their friends, “I had a great workout today.” You feel good about yourself, you feel good all over, you feel efficient, but your body isn’t getting any stronger!

This metaphor reminds me of something I often tell students in class: Tools are used to amplify your abilities, not to replace your thinking. If you let tools replace thinking, then what you are amplifying is not your ability, but your dependence.

▲ Cognitive offloading and false mastery: two invisible killers that quietly shrink the brain


He then mentioned two deeper consequences. The first is the decline in critical thinking, which has the greatest impact on children under the age of sixteen. If you go straight to the answer every time, the neural circuit in the brain that judges the quality of the answer will never be exercised. The second is the deskilling of professionals: they perform well when AI is present, but fall flat once the tools are unplugged. He used the analogy of pilots’ over-reliance on autopilot, which I found very vivid.

“It’s not that you can’t use it, it’s that you can’t hand it over completely.”

This statement sounds gentle, but it actually puts the responsibility back on each of us. AI is not to blame; the real problem is the lazy ways we choose to use it.

Constraints are the most stable foundation

Next, the principal mentioned a thinking framework that made my eyes light up. He said that one habit he picked up at Minerva University is to look for the constraints first when facing any problem.

Many people frown when they hear “constraint” and think of it as an obstacle. But he put it beautifully: In an era of change and instability, if you can find truly stable constraints, those constraints will become your foundation.

When it comes to learning, what are the most stable constraints?

It’s brain science.

“The brain is like a muscle: use it or lose it. If you keep letting AI carry the load for you, your brain will end up like the legs of someone who always takes the elevator, growing weaker and weaker.”

This metaphor is great because it reduces all the debates about “whether AI is good or not” to one very specific and very practical criterion: does your brain work hard when you use AI?

I have been emphasizing a similar idea in my own teaching. I often tell students that when writing an article, they should not throw the entire topic at AI from the start and let it generate a finished piece from scratch. You have to think first, even if that only means writing three keywords on paper and sketching a rough structure. That “think first” step is your brain doing the work. Once you have made that contribution, no matter how AI later helps you polish, supplement, or optimize the article, your own soul will be in it.

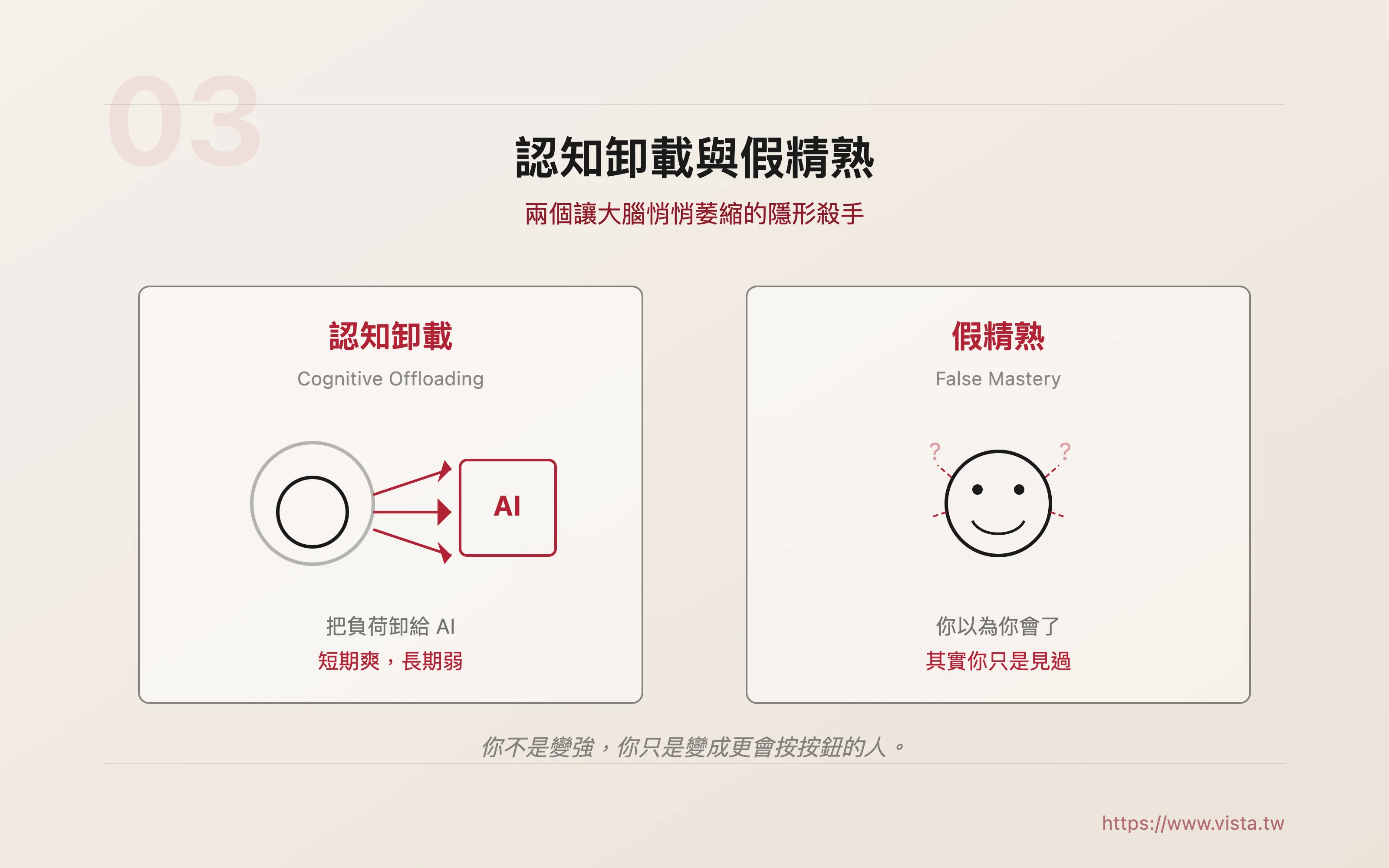

The principal brought out two key concepts here, which I think everyone who uses AI should remember.

The first one is called cognitive offloading. It means handing AI the load that your brain should have carried. It feels great in the short term, but it weakens you in the long term.

The second one is even more frightening: false proficiency. You think you know something because AI did it for you. You look at the finished product and genuinely feel “I understand”, so you stop trying. But you don’t actually understand it; you have only seen it.

False proficiency doesn’t just happen to children. Adults fall prey to it even more often. You wrote a beautiful plan, created a professional brief, and sent a well-written reply, but do you really know why you wrote it this way? Will you still be able to write it without AI’s help next time?

If the answer is “not sure,” then you’re not getting stronger, you’re just becoming a better button-presser.

Level 0: Get your attention back

▲ Attention is not the first level, it is the zeroth level - it is BASE


What the principal said next made the structure of the whole talk suddenly clear to me. He said: Attention is not the first level, it is level zero. It’s BASE.

Without attention, the Socratic method, spaced repetition, and retrieval practice mentioned later are all just words on paper.

He divided the enemies of attention into three, which I think can be called the “triple curse of modern learners”: runaway dopamine (the addiction to stimulation from phones and short videos), cognitive offloading to AI (getting results without thinking), and false proficiency (thinking you have made progress when you haven’t).

Taken individually, these three things are already very powerful, and together they form an almost perfect trap: you are distracted, do not use your brain, and mistakenly think that you are making progress.

As someone who has been producing content for a long time, I know very personally what it is like to have my attention stolen. Writing an article requires deep concentration, and deep concentration requires an uninterrupted stretch of time. But the pace of modern life is almost designed to interrupt you. Every notification, every push, every red dot competes for your attention.

▲ Five attention-saving strategies: from breathing techniques to environmental design


So when the principal began to share his “emergency strategies,” I listened very carefully.

The first trick he mentioned was forced stillness: spend fifteen to thirty minutes every day doing nothing at all, just letting your mind go blank. This sounds counter-intuitive, but I understand his logic. Once your nervous system has been overstimulated past a certain point, you need to bring it back to a neutral state before anything else is possible.

The second trick is Box Breathing, the four-four-four-four breathing method: inhale for four seconds, hold for four seconds, exhale for four seconds, hold for four seconds again, then repeat. I like this method very much, because it is not moral persuasion of the “you must have self-control” kind. It directly uses a physiological mechanism to bring you back from an excited state.

The third trick is HIIT (high-intensity interval training): just ten to fifteen minutes. If you can’t calm down, use exercise to reset your brain. He even thoughtfully mentioned a “No Jumping” version so people living in apartments can do it.

The fourth trick is environmental design, and the most typical example is the phone lock box. I particularly agree with this one. We are too accustomed to “trusting self-control”, but self-control is a resource that gets depleted. Replacing willpower with systems and environment is the more mature approach. When I am writing a long article, I deliberately put my phone in another room, not because I have poor self-control, but because I don’t want to spend my willpower on deciding whether to look at my phone.

The fifth tip is to go back to pen and paper. Pen and paper still work, he said with great conviction. I totally agree. The value of paper and pen is not nostalgia or romance, but “slowness”. Slow down and you can really see what you’re thinking. In this world where everything is accelerating, slowness is a scarce ability.

I have a habit: before planning any important article or course, I always take out my notebook and pour out my ideas in longhand. The process is slow and messy, but it is the messiness that lets me see the connections between ideas and what I really want to say. If I just type on the keyboard or talk directly to the AI from the start, I am easily carried away by the smoothness of the tool and lose my own rhythm.

Brain-protecting AI learning method: a beautiful closed loop

▲ Brain protection triangle: people first, machine later; Socratic guidance; metacognitive monitoring

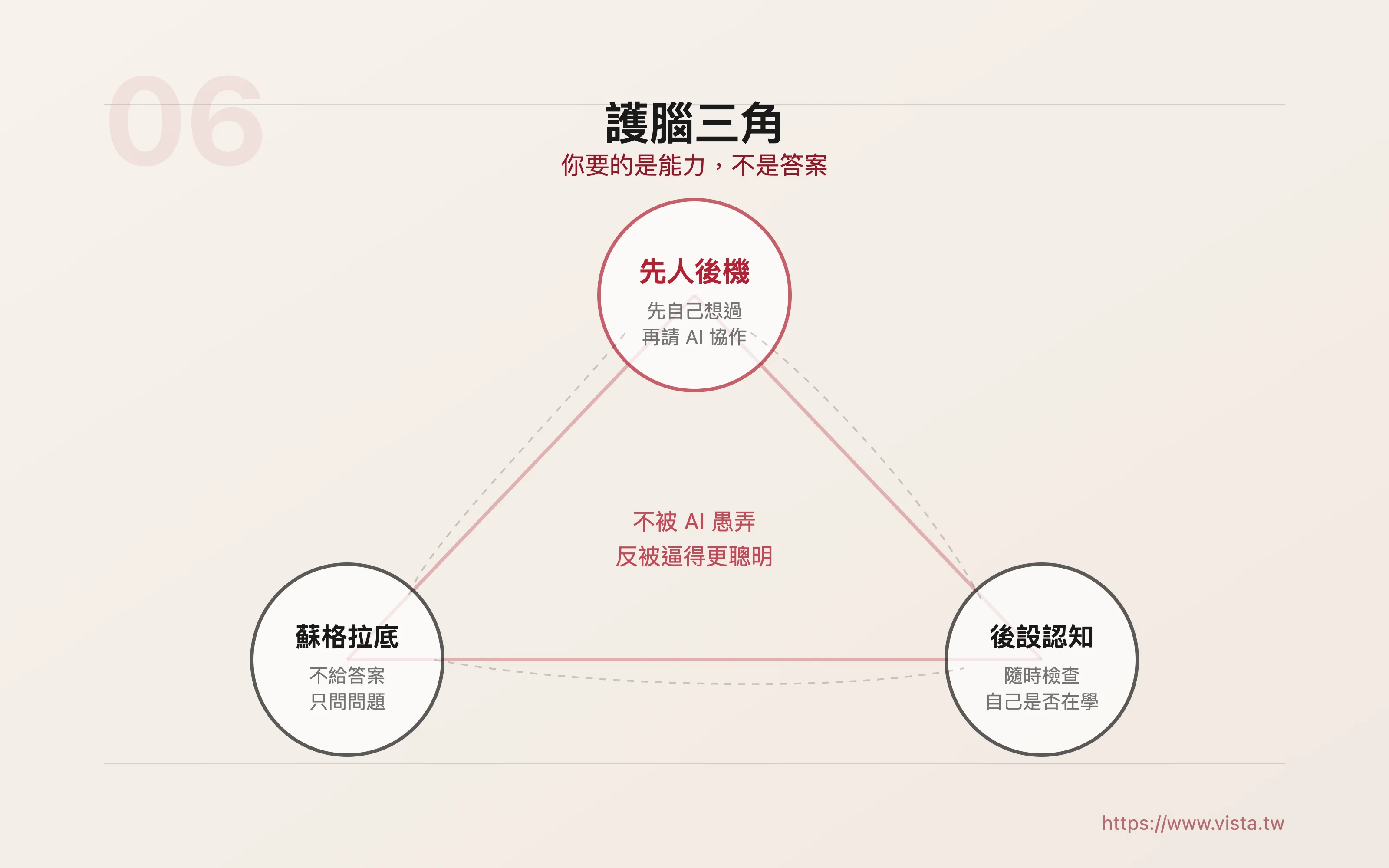

With level zero stabilized, the principal turned to the real core issue: How can we use AI in a way that does not harm the brain, and even makes it stronger?

He gave three strategies, and after listening to them, I felt that they formed a very beautiful closed loop.

The first strategy is “people first, machine later.” You must think it through yourself before asking AI to collaborate. This is not a slogan, it is a bottom line. He specifically named one scenario: letting the AI write an entire composition first, and then letting the children “reference” it. This is the worst way, because the children’s minds will be occupied by the AI’s narrative and phrasing, and whatever they write afterwards can hardly escape its influence. A better approach is to first let the children write down their thoughts with pen and paper, no matter how rough or incomplete; then photograph or paste them into the AI, and ask the AI to play the role of a coach, giving suggestions, corrections, and feedback.

This principle applies to adults as well. When I taught writing classes, what I feared most was students simply throwing the question at the AI, getting a finished manuscript back, and feeling that “the task is completed.” That’s not learning, that’s outsourcing. Real learning is when you struggle and think first, and then AI helps you see the blind spots you missed and polish your rough ideas into something more precise. That process is what is valuable.

The second strategy is “Socratic facilitation.” He mentioned that Alpha School uses AI as a Socratic tutor. It does not give answers directly, but uses a series of questions to guide learners to think and discover on their own.

I think this concept is very important. Because the way most people use AI is like using a vending machine—put a coin in, push a button, and take away the item. But if you set the AI to “not allowed to give me answers directly, but can only ask me questions and guide me to find the answers myself,” the quality of the entire learning will be completely different. You’re not buying the answers, you’re getting the training.

When I am preparing lessons, I sometimes deliberately use this method to talk to AI. For example, I am preparing a course on content strategy. Instead of asking, “List the ten key points of content strategy for me,” I will say, “Suppose you are a student who doesn’t know anything about content strategy. What questions would you ask me?” Then I try to answer those questions. This process forces me to reorganize things that I thought I knew very well, and I can often find some loopholes that I didn’t notice in the past.
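If you talk to an AI through a script instead of a chat window, the “questions only” setup described above can be written down once and reused. The following is a minimal Python sketch, not anyone’s official method: the prompt wording, the names `SOCRATIC_RULES` and `build_socratic_messages`, and the role/content message layout (a common chat convention) are all assumptions made for illustration. It also enforces “think first” by refusing to build a request until you have written down your own attempt.

```python
from typing import Dict, List

# Hypothetical wording: the talk does not give an exact prompt.
SOCRATIC_RULES = (
    "You are a Socratic tutor. Do not give me the answer directly. "
    "Ask me one guiding question at a time and wait for my reply. "
    "Only confirm or correct my reasoning after I have attempted an answer."
)


def build_socratic_messages(topic: str, own_attempt: str) -> List[Dict[str, str]]:
    """Build a chat-style message list that puts the learner's own attempt first."""
    # Think first: an empty attempt means the learner has not done any work yet.
    if not own_attempt.strip():
        raise ValueError("Write down your own thinking before asking the AI.")
    return [
        {"role": "system", "content": SOCRATIC_RULES},
        {"role": "user", "content": f"Topic: {topic}\nMy attempt so far: {own_attempt}"},
    ]


if __name__ == "__main__":
    # Print the messages you would hand to whichever chat tool you use.
    for message in build_socratic_messages("content strategy", "Audience, goal, channel"):
        print(f"[{message['role']}] {message['content']}")
```

The sketch only assembles the messages; sending them to a particular AI service is left out on purpose, since that part depends on the tool you use.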

The third strategy is “metacognitive monitoring.” That is, check yourself at any time: Am I actually learning right now?

A true learner is always asking himself these questions: What was I talking about just now? How does this paragraph connect to the previous paragraph? Is my inference reasonable? Did I really understand it, or did I just remember the superficial words?

The principal said it very bluntly: It is not easy to significantly improve IQ, but metacognition can be trained. And a person with good metacognitive ability will learn everything faster than others, because he will always know where he is stuck and where he needs reinforcement.

Taken together, these three strategies (think for yourself first, let AI guide you with questions, then monitor yourself) form a solid tripod. When you stand on it, not only will you not be fooled by the AI, but you will be forced to become smarter.

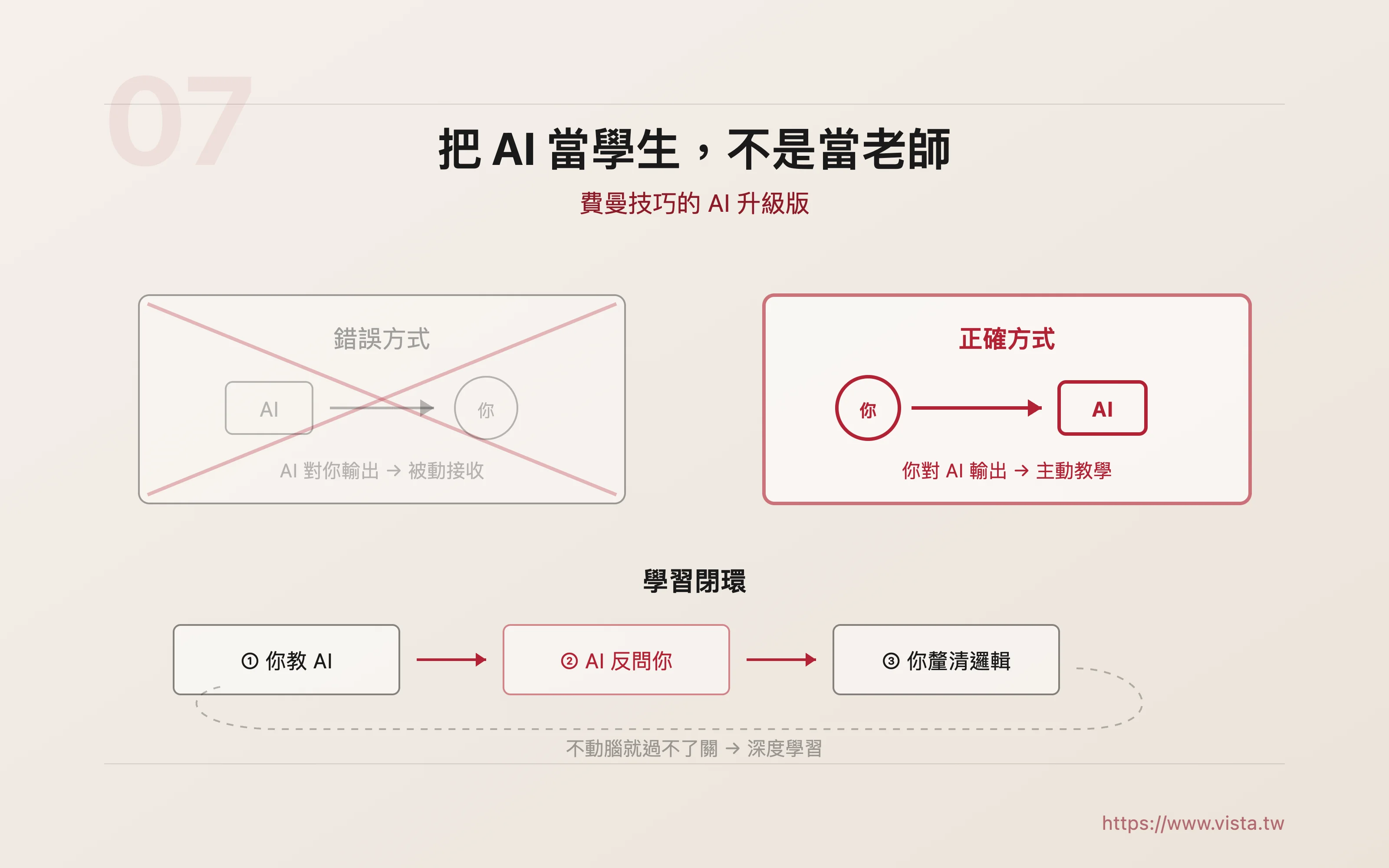

Treat AI as a student, not a teacher

▲ Treat AI as a student, not a teacher


In the whole talk, there is one sentence that I think is the most practical. The principal said:

“Don’t let the AI output to you; you output to the AI.”

This means: Instead of letting AI act as the teacher while you passively receive as the student, do the opposite. You act as the teacher and treat AI as a student to be taught. After teaching, let the AI ask you questions, test you, and force you to explain the knowledge clearly.

Put simply, this is an AI-upgraded version of the Feynman technique. Feynman said that if you can’t explain something clearly in simple language, you don’t really understand it. Now with AI, you have a practice partner who never gets impatient and can play the student at any time.

The most powerful thing about this method is that you don’t need willpower to remind yourself to use your brain, because you designed the process from the start so that “you can’t pass if you don’t use your brain.” You must organize your language, clarify your logic, and answer follow-up questions, and these steps are themselves deep learning.

When I write a book or a column, I occasionally use a similar method. I paste a draft paragraph into the AI and say: “Suppose you don’t understand this field at all. What questions do you have after reading this paragraph?” The questions the AI raises often point to my blind spots: background knowledge that I assumed the reader would have actually needs one more step of explanation; a logical jump that I thought was clear is actually missing a link in the middle.

This is the power of treating AI as a student. In other words, you are not consuming the output of AI, you are using AI’s feedback to improve your thinking.

Win with data: What AI can best help you with is to see your blind spots

▲ Johari Window: AI is your mirror, it can see your blind spots

In the second half of the lecture, the principal talked about a more advanced concept, one that I think is particularly valuable for adults.

He said that the key to AI competition is not computing power or models, but data. To be more precise, it is not general data, but data that can generate “personalized insights.”

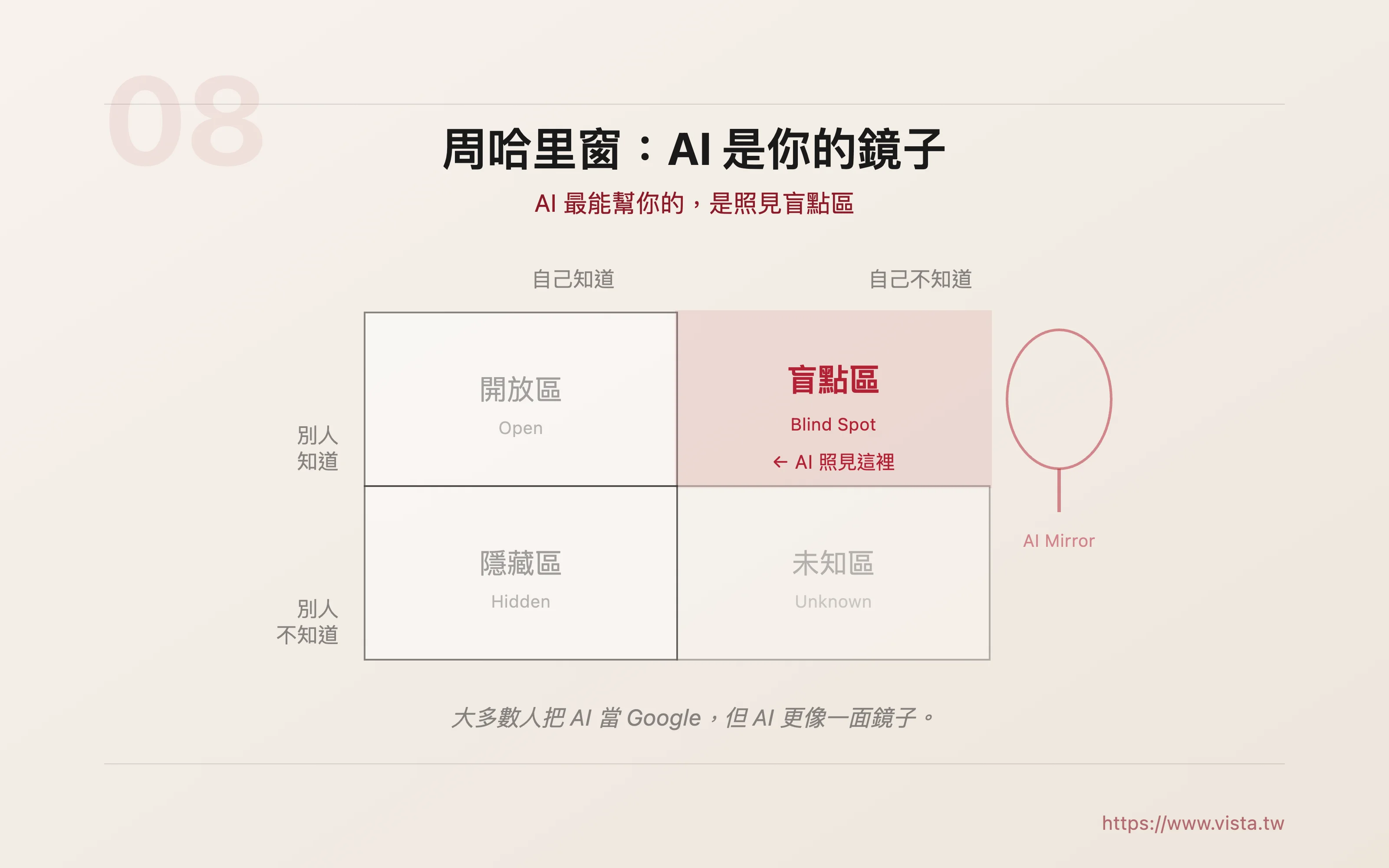

He borrowed the concept of the “Johari Window” from psychology to illustrate: Everyone’s self-perception can be divided into four quadrants: the open area (what you know and others know), the hidden area (what you know but others don’t), the blind spot (what you don’t know but others can see), and the unknown area (what neither party knows).

And where AI can help you most is precisely in that blind spot area.

This idea made me think about it for a long time. Because most people think of AI as an upgraded search engine—I ask it questions and it gives me answers. But search engines can only answer questions you can “ask.” The essence of the blind spot area is: you don’t know what you don’t know, so you can’t ask that question at all.

The great thing about AI is that if you are willing to provide enough context and material, it can act like a mirror, reflecting angles that habit keeps you from seeing. It can point out jumps in logic in your article, narrative blind spots in your brief, or risks you failed to consider in your plan. But the premise is that you must be willing to “feed” it enough material, and you must have the openness to accept what it shows you.

The principal proposed two very practical strategies. The first is to build an AI that “knows you”: keep using the same AI and let it accumulate your context, your preferences, and your thinking patterns. The second is to establish your “anchor knowledge”: use the things you know best and feel most strongly about to anchor new knowledge.

We can all borrow this approach directly. Just think: the things you deal with at work every day may not be what you are most passionate about, but they are almost certainly what you are most familiar with. Use them as an anchor for new knowledge and skills, and your memory will be stronger and your application faster.

Sense of meaning: the thing least able to be automated in the AI era

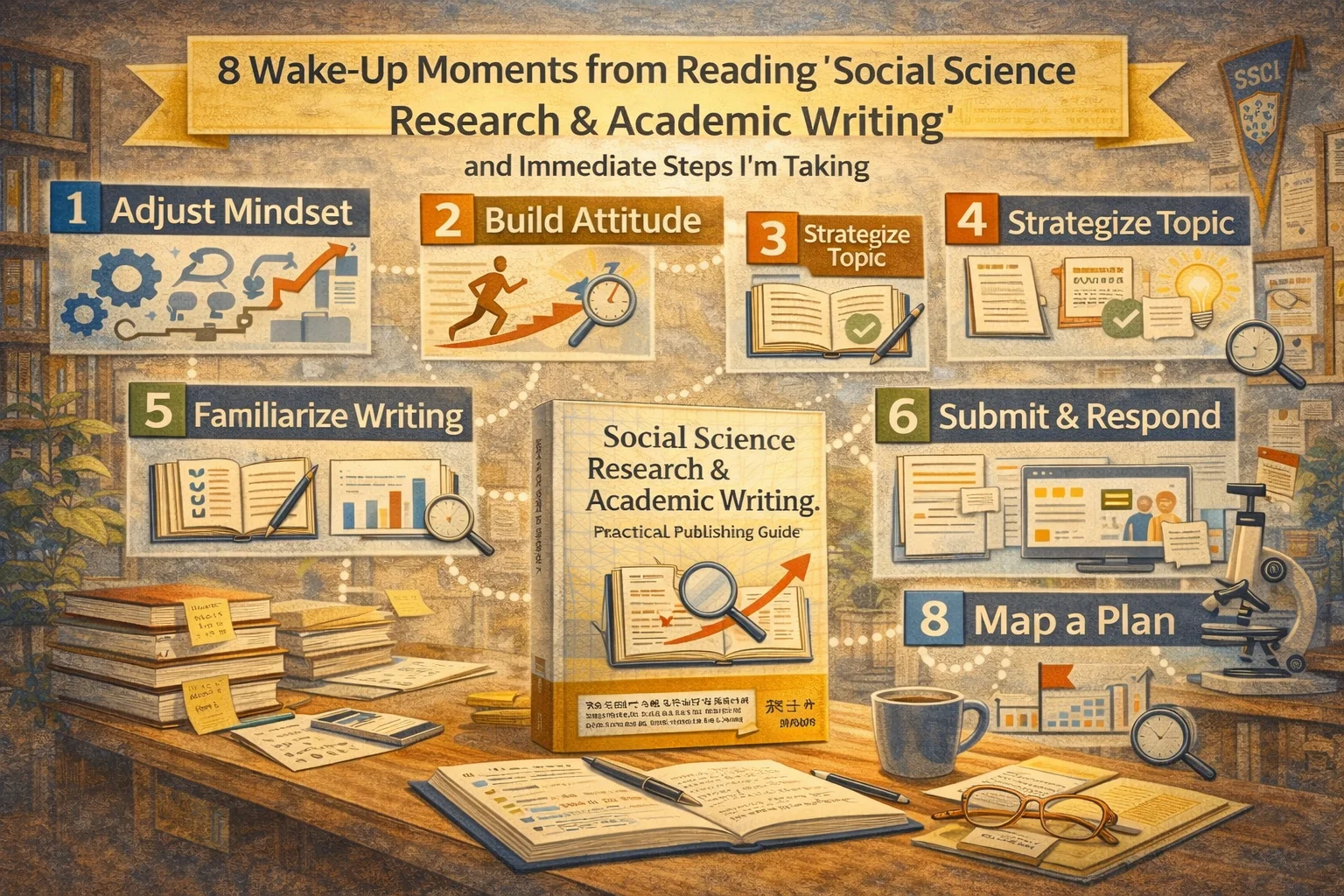

Midway through, the principal mentioned Tim Ferriss’s DiSSS learning framework: Deconstruction (breaking a skill down), Selection (finding the critical 20%), Sequencing (arranging the order of learning), and Stakes (designing motivations and consequences).

You may have heard of the first three, but he emphasized that the most important one is the fourth: Stakes - a sense of meaning.

I think this points to the deepest dilemma of modern people. It’s not that we don’t have resources, tools, or information. What we lack most is motivation. AI makes “can do” too easy, but makes “why you need to do it” more important and more ambiguous.

“Humans must find a sense of meaning, otherwise AI will do everything for you and you won’t know where to go next.”

This reminds me of my own experience. I have been writing articles for more than ten years, and sometimes I ask myself: Now that AI can write smooth articles, make beautiful briefings, and produce professional analysis reports, what else should I write? Where is my value?

Later I figured it out. AI can write a correct article, but it can’t write “my” article. It can organize information, sort out logic, and polish text, but it does not have my fifteen years of teaching experience, the real dilemmas I have observed inside every company I have visited, or the experience I have built up from working with each student. Those things are the source of my sense of meaning, and they are also the core that AI cannot replace.

So every time someone asks me “Will AI replace writers?”, my answer is: AI will replace writers who only relay knowledge, but it will not replace those who have their own opinions, their own warmth, and their own “why they write.”

Your sense of meaning is your strongest moat in the AI era.

Three things I took away

Thank you to the principal for this wonderful lecture. I was also happy to see his two sons share their own views. Although I am single and have no children, I am glad to recommend my friend’s online course, “The Chief Principal’s AI Education Guide | Master the smart learning skills necessary for the next 30 years!”

After listening to this lecture last night, I wrote down three strategies in my notebook, which serve as my own blueprint for action.

First, rescue your attention before talking about anything else. Attention is level zero, the foundation of everything. Use breathing techniques, exercise, environmental design, and pen and paper every day to bring your nervous system back to a neutral state. Don’t count on willpower.

Second, turn AI from a stand-in into a coach. Use “people first, machine later”, “Socratic guidance”, and “metacognitive monitoring” to form the brain protection triangle. What you really want is growth in ability, not a beautiful finished product.

Third, use AI to make your own tools. The best use of AI is to systematize your problems and create tools that can be used repeatedly. Instead of letting AI solve problems for you, let AI help you build a system to solve problems.

Closing thoughts

Actually, I sat at my desk for a long time last night.

The screen had gone dark, but my mind was still spinning. What I was thinking about was not just those frameworks and strategies, but a bigger question: In this era when AI has caught up with us, what posture do we want to take moving forward?

I thought about it for a long time and finally came to a very simple conclusion.

AI is like a new terrain. Since you can’t escape it, you have to learn to walk on it. And walking depends not on more amazing models or more expensive tool subscriptions, but on a few very basic things: Can you get your attention back? Can you make your brain work every time you use AI? Can you turn learning into a sustainable system of competencies? Can you turn life’s dilemmas into tools and chaos into strategy?

If I were to sum up the inspiration that night brought to me in one sentence, I would say:

“AI will not make you stronger, nor will it make you dumb. What it will turn you into depends on what you let it do for you, and how much you contribute.”

And the choice to contribute is always in your hands.
